In the current era of data science, the development of powerful deep learning models has been made possible by the emergence of sophisticated algorithms and machine learning frameworks. However, the efficiency and scalability of the deep learning pipeline is not only based on these frameworks but also reliant on the process of deployment and maintenance called MLOps. Essentially, MLOps implements DevOps principles to machine learning pipelines to ensure reliable, scalable, and automated workflows. In this post, we will discuss the specific challenges of implementing MLOps for deep learning and provide best practices and tools for building a scalable and efficient deep learning pipeline.

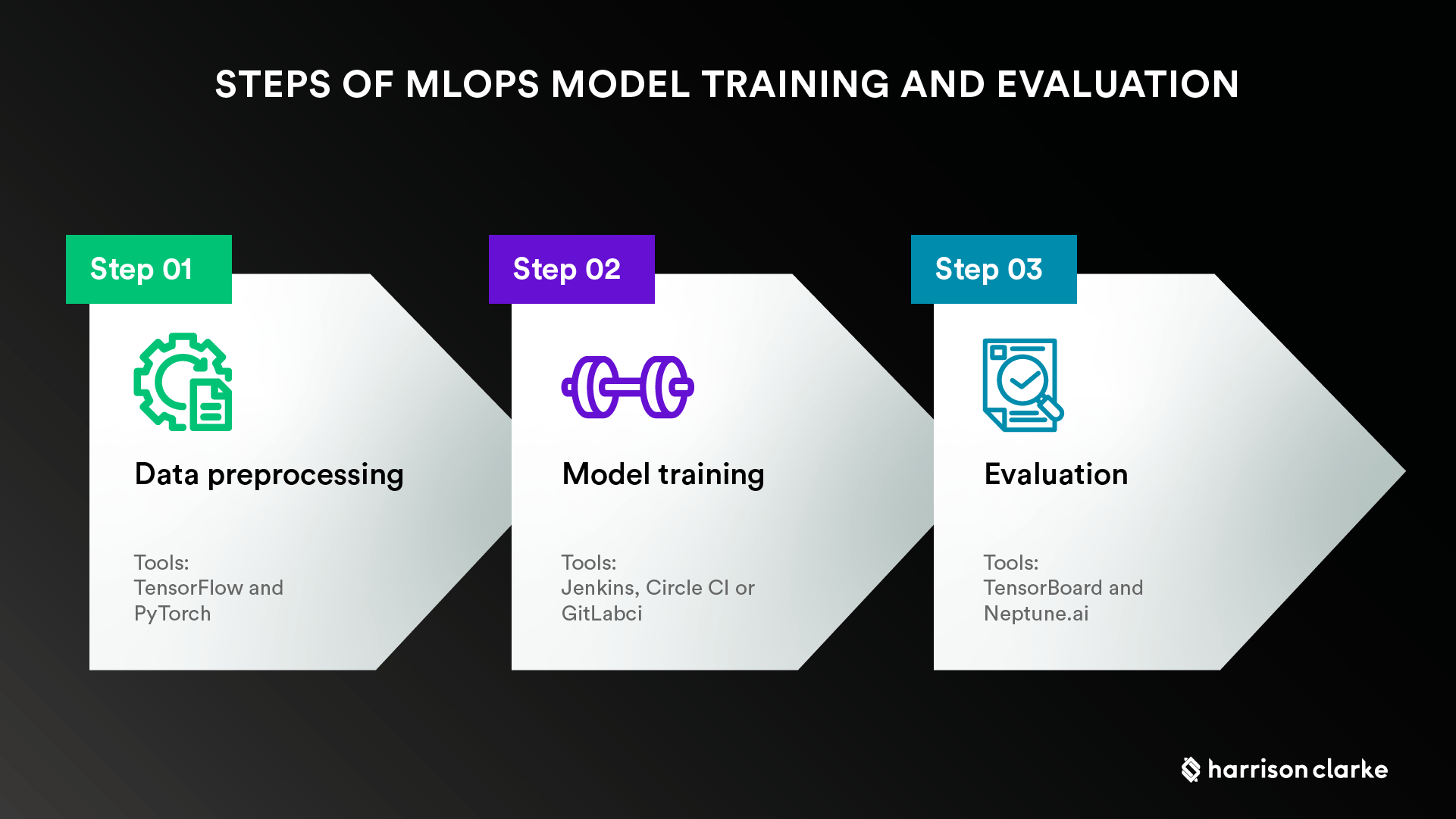

Model Training and Evaluation

In MLOps, the development of a deep learning pipeline begins with data preprocessing, model training, and evaluation. When dealing with data discovery and model exploration, there is a need to choose efficient and scalable frameworks like TensorFlow and PyTorch. Additionally, it is important to automate the model training process using CI/CD tools like Jenkins, Circle CI or GitLab CI for consistency and faster feedback. Finally, the trained models should be evaluated using automated tools that provide relevant metrics like accuracy, F1-score, and loss functions. Tools like TensorBoard and Neptune.ai provide easy-to-use and effective visualization features for model visualization and evaluation.

Model Deployment

Model deployment is a critical step in a deep learning pipeline since it is where the models are tested and delivered for production. For instance, deep learning models in computer vision applications require GPUs and optimized libraries like CUDA for efficient and faster production. In this step, MLOps provides various tools and frameworks like Kubernetes and Docker for creating an efficient deployment environment. The use of containerized environments ensures that models are delivered to the production environment with consistent dependencies and configurations.

Model Monitoring and Improvement

After deploying machine learning models to production, you must monitor the model's performance to detect any drift patterns that may require tuning. Often, in deep learning models, monitoring is done based on inputs, outputs, and behavior patterns using tools like DataDog or Splunk. By getting insights from production-level monitoring, MLOps experts can make decisions about continuous deployment or release, and also deploy newly created models with newly generated data. TensorFlow, PyTorch, and other popular deep learning frameworks have built-in support for model monitoring, which provides ML engineers and experts with clear insights into the model's performance.

Continuous Integration and Deployment (CI/CD)

Developing deep-learning applications that meet real-world requirements is more than model training and production release, including testing, pre-production staging, and post-production monitoring. CI/CD pipelines deliver a predictable and efficient environment for continuous testing, building and deployment by automating code changes and ensuring that updates are pushed only after being tested and reviewed by the developer team. Effective CI/CD pipelines should be designed to allow for fast feedback cycles and provide scalability.

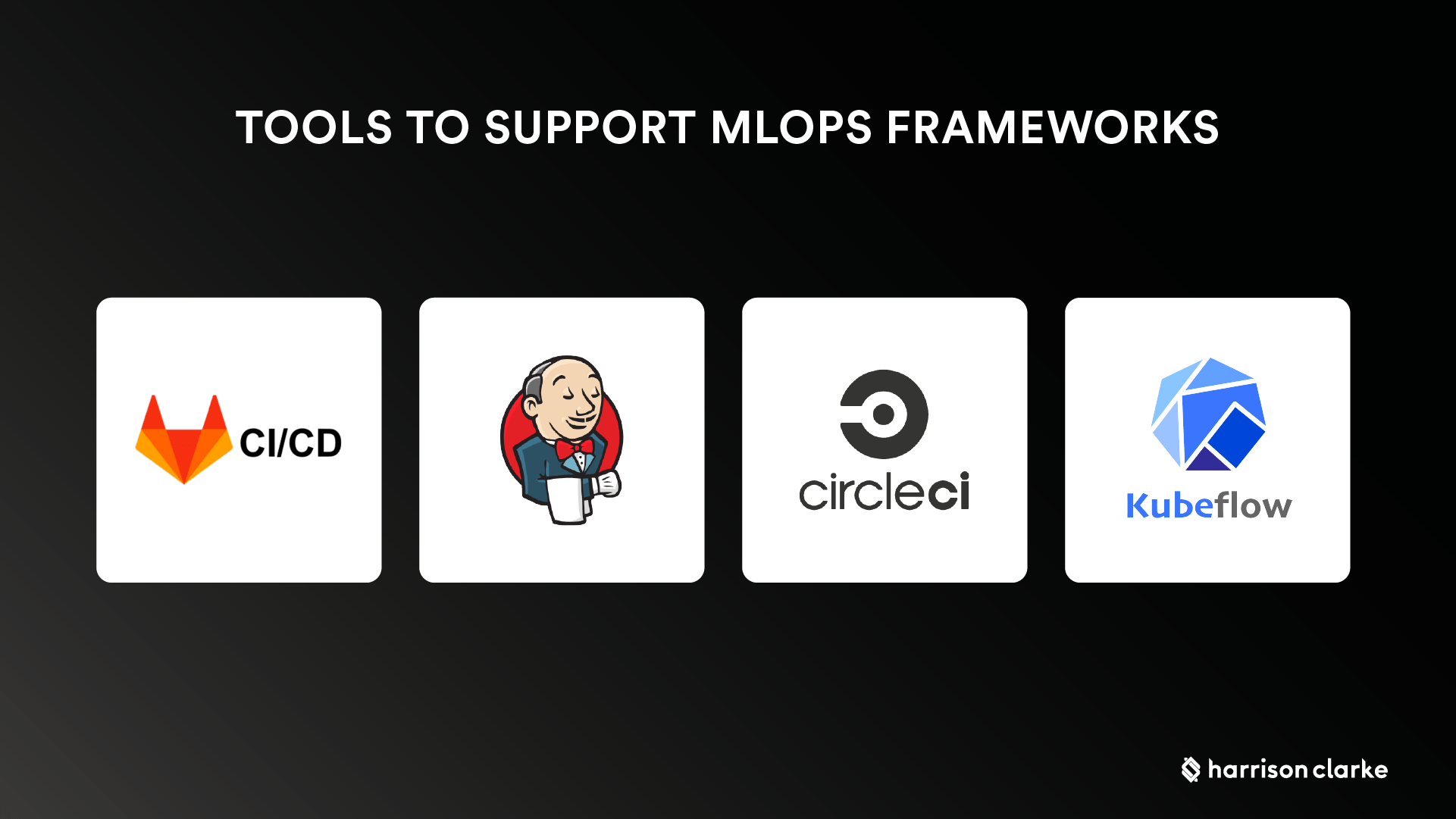

MLOps Tools

There are various tools available to support MLOps frameworks, and selection often depends on the adoption of current development environments. For instance, popular tools may include Jenkins, Circle CI or GitLab CI, where new deep learning models may go through tests that ensure they remain consistent and scalable in a production grade environment. Other interesting and useful MLOps tools that are available include Kubeflow for managing machine learning workflows and Spiceworks for remote infrastructure deployment, among others.

In conclusion, the implementation of MLOps for deep learning models is an essential part of the machine learning development process that requires specific planning, tools, and best practices. To ensure a scalable, efficient and reliable deep learning pipeline, engineers must take into consideration all stages from model development to deployment and eventually, the maintenance of the models. Through the use of automation, containerization, and CI/CD pipelines, it is possible to create a DevOps-style production-ready environment, resulting in efficient and reliable machine learning. By following these best practices and using the right tools, teams can achieve more with MLOps for deep learning models in production.